背景

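Companies regularly hold internal talks and training sessions. If all of them were streamed over the public internet, that many viewers pulling the stream at once would certainly overwhelm the company's public bandwidth~

There are of course plenty of commercial solutions to this problem, but they easily cost hundreds of thousands (RMB), which we simply can't afford~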

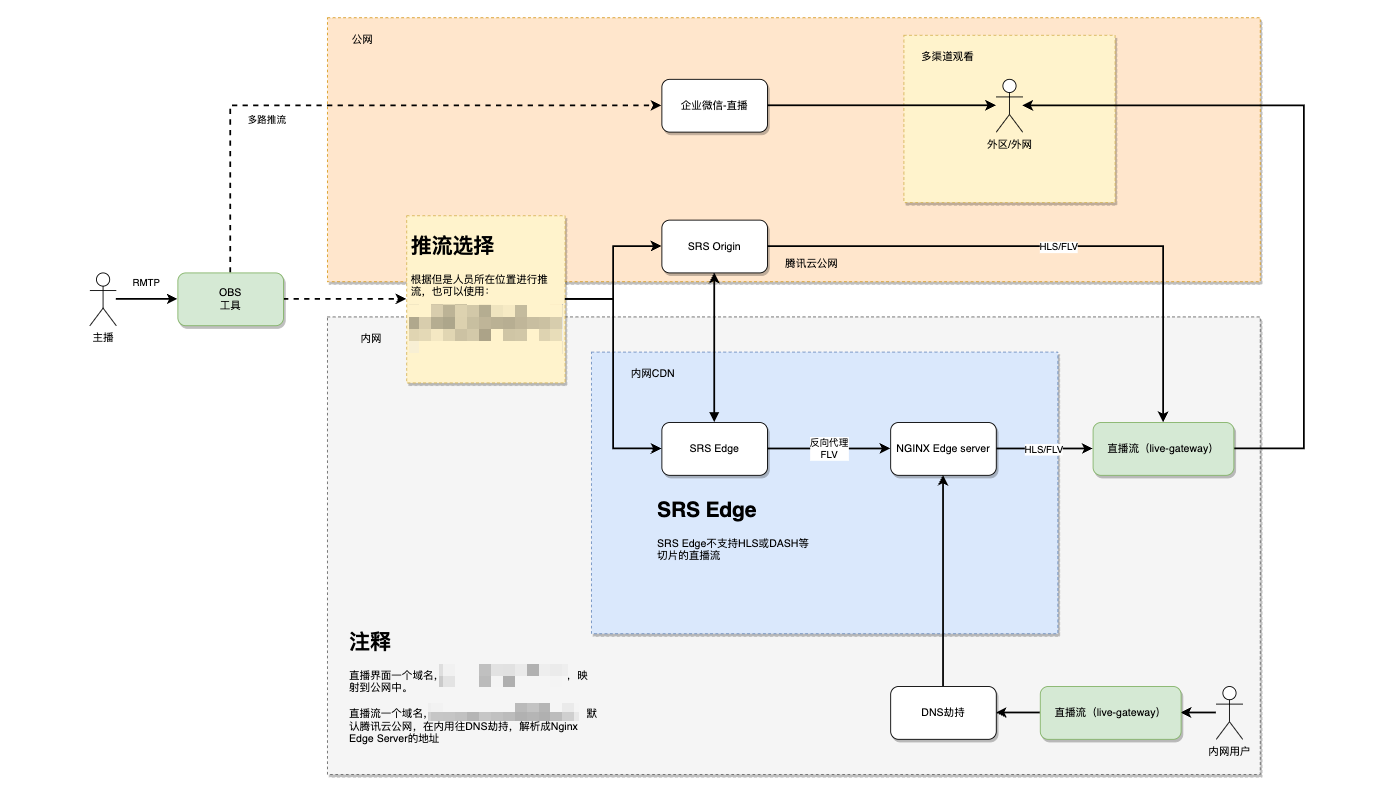

First, the architecture diagram:

Architecture

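A quick explanation:

The streamer uses OBS to push the stream to an SRS edge node (Edge) or to the origin node (Origin). With a single node you can skip Nginx entirely: Nginx and the Edge would run on the same machine, so Nginx would not really spread any load.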
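OBS does not accept a single push URL. Under Settings > Stream (service "Custom") it asks for a Server and a Stream Key. The sketch below shows how one push URL maps onto those two fields. It assumes a push URL of the form rtmp://host:1935/app/stream with a single-level app, and it uses a hypothetical host name, edge.example.local. Substitute the edge's address or the origin's, depending on which node the streamer should push to.

```shell
#!/usr/bin/env bash
# Split an RTMP push URL into the two fields OBS asks for.
# Assumption: URL form rtmp://host[:port]/app/stream (single-level app, no query string).
obs_settings() {
  local url="$1"
  local server="${url%/*}"   # drop the last path segment -> rtmp://host:port/app
  local key="${url##*/}"     # keep only the last path segment -> stream
  printf 'Server: %s\nStream Key: %s\n' "$server" "$key"
}

# edge.example.local is a hypothetical host name.
obs_settings "rtmp://edge.example.local:1935/live/livestream"
# Server: rtmp://edge.example.local:1935/live
# Stream Key: livestream
```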

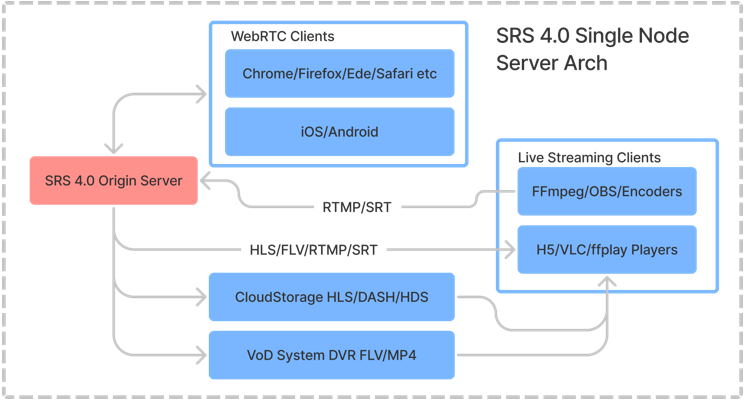

Official SRS overview

Deployment

For playback I use HTTP-FLV.
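The configurations below use the mount pattern [vhost]/[app]/[stream].flv and hls_m3u8_file [app]/[stream].m3u8, so a stream's play URLs can be derived mechanically. This is a sketch under three assumptions:

- The default vhost is in use, in which case the [vhost] part does not appear in the URL.
- SRS's http_server listens on port 8080.
- edge.example.local is a hypothetical host.

The optional fourth argument swaps in a different port, for example the one the Nginx front end listens on if viewers go through it.

```shell
#!/usr/bin/env bash
# Print play URLs for one stream, following the mount patterns used below:
#   http_remux mount [vhost]/[app]/[stream].flv -> http://host:port/app/stream.flv
#   hls_m3u8_file   [app]/[stream].m3u8         -> http://host:port/app/stream.m3u8
# Assumes the default vhost; port defaults to SRS's http_server port 8080.
play_urls() {
  local host="$1" app="$2" stream="$3" port="${4:-8080}"
  printf 'HTTP-FLV: http://%s:%s/%s/%s.flv\n' "$host" "$port" "$app" "$stream"
  printf 'HLS:      http://%s:%s/%s/%s.m3u8\n' "$host" "$port" "$app" "$stream"
}

play_urls edge.example.local live livestream
# HTTP-FLV: http://edge.example.local:8080/live/livestream.flv
# HLS:      http://edge.example.local:8080/live/livestream.m3u8
```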

Edge node configuration

Configuration file

```
listen 1935;
max_connections 1000;
daemon on;
srs_log_tank console;

http_api {
    enabled on;
    listen 1985;
    https {
        enabled on;
        listen 1990;
        key ./custom/server.key;
        cert ./custom/server.crt;
    }
}

http_server {
    enabled on;
    listen 8080;
    dir ./objs/nginx/html;
    https {
        enabled on;
        listen 8088;
        key ./custom/server.key;
        cert ./custom/server.crt;
    }
}

srt_server {
    enabled on;
    listen 10080;
    maxbw 1000000000;
    connect_timeout 4000;
    peerlatency 0;
    recvlatency 0;
    latency 0;
    tsbpdmode off;
    tlpktdrop off;
    sendbuf 2000000;
    recvbuf 2000000;
}

# @doc https://github.com/ossrs/srs/issues/1147#issuecomment-577607026
vhost __defaultVhost__ {
    # mode remote turns this vhost into an edge of the origin below:
    # played streams are pulled from it, published streams are forwarded to it
    cluster {
        mode remote;
        origin 10.152.9.173:1935;
    }
    http_remux {
        enabled on;
        mount [vhost]/[app]/[stream].flv;
    }
    hls {
        enabled on;
        hls_fragment 10;
        hls_window 60;
        hls_path ./objs/nginx/html;
        hls_m3u8_file [app]/[stream].m3u8;
        hls_ts_file [app]/[stream]-[seq].ts;
    }
    srt {
        enabled on;
        srt_to_rtmp on;
    }
}

# For SRT to use vhost.
vhost srs.srt.com.cn {
}

stats {
    network 0;
}
```

docker-compose
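Both https blocks above point at ./custom/server.key and ./custom/server.crt. The compose file mounts /root/srs/custom to /usr/local/srs/custom, so that key pair should be in place on the host before the container starts. If no certificate from an internal CA is available, here is a minimal sketch for generating a self-signed pair. The default directory comes from the compose file. The CN srs.internal is a hypothetical placeholder, and browsers will warn about a self-signed certificate.

```shell
#!/usr/bin/env bash
# Generate a self-signed key/cert pair with the file names the SRS config expects.
# Defaults: target dir from the compose volume; CN "srs.internal" is a placeholder.
make_srs_cert() {
  local dir="${1:-/root/srs/custom}" cn="${2:-srs.internal}"
  mkdir -p "$dir"
  # -nodes: leave the private key unencrypted so SRS can read it without a passphrase
  openssl req -x509 -newkey rsa:2048 -nodes -sha256 -days 365 \
    -subj "/CN=${cn}" \
    -keyout "${dir}/server.key" -out "${dir}/server.crt" 2>/dev/null
}
```

For example, run `make_srs_cert /root/srs/custom` on the edge host. On the origin, run `make_srs_cert /data/srs/conf` instead, because its compose file mounts that directory as custom.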

```yaml
version: '3'
services:
  srs:
    image: registry.cn-hangzhou.aliyuncs.com/ossrs/srs:5
    volumes:
      - /root/srs/srs.conf:/usr/local/srs/conf/srs.conf
      - /root/srs/custom:/usr/local/srs/custom
    # command: ./objs/srs -c conf/rtmp2rtc.conf
    command: ./objs/srs -c conf/srs.conf
    container_name: srs-jz
    network_mode: my_custom_network
    ports:
      - "1935:1935"
      - "1985:1985"
      - "8080:8080"
      - "8088:8088"
      - "8000:8000/udp"
      - "1990:1990"
      - "10080:10080/udp"
```

Origin node configuration
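A visible difference from the edge is the HLS timing. The origin below uses hls_fragment 2 and hls_window 10, while the edge above uses 10 and 60. A rough back-of-the-envelope sketch of what those numbers mean:

- The playlist holds about window / fragment segments.
- RFC 8216 says a client should not start playback less than three target durations from the end of a live playlist, so the delay is at least roughly 3 × fragment.

Real segments are cut at keyframes and can run longer than hls_fragment, so treat these figures as lower bounds.

```shell
#!/usr/bin/env bash
# Rough HLS sizing from two SRS settings (both in seconds).
#   segments in playlist ~= hls_window / hls_fragment   (integer division)
#   minimum player delay ~= 3 * hls_fragment             (RFC 8216 three-target-duration rule)
hls_estimate() {
  local fragment="$1" window="$2"
  echo "segments=$(( window / fragment )) min_delay=$(( 3 * fragment ))s"
}

hls_estimate 2 10    # origin settings: segments=5 min_delay=6s
hls_estimate 10 60   # edge settings:   segments=6 min_delay=30s
```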

Configuration file

```
listen 1935;
max_connections 1000;
daemon off;
srs_log_tank console;

http_api {
    enabled on;
    listen 1985;
    https {
        enabled on;
        listen 1990;
        key ./custom/server.key;
        cert ./custom/server.crt;
    }
}

http_server {
    enabled on;
    listen 8080;
    dir ./objs/nginx/html;
    https {
        enabled on;
        listen 8088;
        key ./custom/server.key;
        cert ./custom/server.crt;
    }
}

# @doc https://github.com/ossrs/srs/issues/1147#issuecomment-577607026
vhost __defaultVhost__ {
    http_remux {
        enabled on;
        mount [vhost]/[app]/[stream].flv;
    }
    hls {
        enabled on;
        hls_fragment 2;
        hls_window 10;
        hls_path ./objs/nginx/html;
        hls_m3u8_file [app]/[stream].m3u8;
        hls_ts_file [app]/[stream]-[seq].ts;
    }
}

stats {
    network 0;
}
```

docker-compose
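Unlike the edge, the origin's compose file below mounts the host directory /data/srs/conf as /usr/local/srs/custom and starts SRS with -c custom/srs.conf. That means srs.conf and the server.key/server.crt pair referenced as ./custom/... all have to live in that one host directory. Here is a small preflight sketch, assuming that layout; check_origin_dir is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# Preflight check for the origin: every file SRS will read via ./custom/ must
# exist (and be non-empty) in the host directory mounted as /usr/local/srs/custom.
check_origin_dir() {
  local dir="${1:-/data/srs/conf}" missing=0 f
  for f in srs.conf server.key server.crt; do
    if [ ! -s "${dir}/${f}" ]; then
      echo "missing: ${dir}/${f}" >&2
      missing=1
    fi
  done
  return "$missing"   # 0 when everything is present
}
```

For example, run `check_origin_dir /data/srs/conf && docker compose up -d`, or `docker-compose up -d` with Compose v1.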

```yaml
version: '3'
services:
  srs:
    image: registry.cn-hangzhou.aliyuncs.com/ossrs/srs:5
    volumes:
      - /data/srs/conf:/usr/local/srs/custom
    command: ./objs/srs -c custom/srs.conf
    container_name: srs-origin
    ports:
      - "1935:1935"
      - "1985:1985"
      - "8080:8080"
      - "8088:8088"
      - "8000:8000/udp"
      - "1990:1990"
      - "10080:10080/udp"
    environment:
      - CANDIDATE=xxx.xxx.xxx.xxx
```

Nginx Edge Server

Cache configuration

Path: /etc/nginx/conf.d/srs-cache.conf

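```nginx
# Cache store: 8 MB shared-memory zone for keys, at most 1000 MB on disk,
# entries not accessed for 600 minutes are removed
proxy_cache_path /data/nginx-cache levels=1:2 keys_zone=srs_cache:8m max_size=1000m inactive=600m;
proxy_temp_path /data/nginx-cache/tmp;

# Access log with $upstream_cache_status (HIT, MISS, EXPIRED, ...) as the first field
log_format main_cache '$upstream_cache_status $remote_addr - $remote_user [$time_local] "$request" '
                      '$status $body_bytes_sent "$http_referer" '
                      '"$http_user_agent" "$http_x_forwarded_for"';
access_log /var/log/nginx/access.log main_cache;
```

Forwarding configuration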
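When debugging the cache, it can help to find the file nginx wrote for a given request. Per the nginx proxy_cache_path documentation, the cache file name is the MD5 of the cache key. The levels=1:2 setting in the cache configuration above turns the last hex character, then the two characters before it, into subdirectories.

The sketch below assumes two things:

- The cache root is /data/nginx-cache, as in that config.
- The key is the .m3u8 key $scheme$proxy_host$uri$args used in the forwarding configuration below. $proxy_host there is 127.0.0.1:8080, and $args is empty when there is no query string.

The .ts location uses $scheme$proxy_host$uri, without $args.

```shell
#!/usr/bin/env bash
# Compute where nginx stores the cache entry for a given cache key.
# File name = md5(key); levels=1:2 -> <last hex char>/<two chars before it>/<md5>
cache_file_for_key() {
  local key="$1" root="${2:-/data/nginx-cache}" hash
  hash="$(printf '%s' "$key" | md5sum | cut -d' ' -f1)"
  printf '%s/%s/%s/%s\n' "$root" "${hash:31:1}" "${hash:29:2}" "$hash"
}

# Key for GET /live/livestream.m3u8 without query string: the variables are
# concatenated with no separators.
cache_file_for_key "http127.0.0.1:8080/live/livestream.m3u8"
```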

Path: /etc/nginx/default.d/srs-cache.conf

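```nginx
# Cache 404 responses for 10 s
proxy_cache_valid 404 10s;
# Only one request per cache key is passed to SRS at a time;
# concurrent requests for the same key wait for the cached copy
proxy_cache_lock on;
# If that request has not finished after 300 s, one more may be passed to SRS
proxy_cache_lock_age 300s;
# Waiting requests give up after 300 s and are passed to SRS uncached
proxy_cache_lock_timeout 300s;
# Cache a response starting from its first request
proxy_cache_min_uses 1;

# HLS playlists: rewritten as new segments arrive, so cache only briefly
location ~ /.+/.*\.(m3u8)$ {
    proxy_set_header Host $host;
    proxy_pass http://127.0.0.1:8080$request_uri;
    proxy_cache srs_cache;
    proxy_cache_key $scheme$proxy_host$uri$args;
    proxy_cache_valid 200 302 10s;
    add_header X-Cache-Status $upstream_cache_status;
}

# HLS segments: never change once written, so cache for an hour
location ~ /.+/.*\.(ts)$ {
    proxy_set_header Host $host;
    proxy_pass http://127.0.0.1:8080$request_uri;
    proxy_cache srs_cache;
    proxy_cache_key $scheme$proxy_host$uri;
    proxy_cache_valid 200 302 3600s;
    add_header X-Cache-Status $upstream_cache_status;
}

# HTTP-FLV: a continuous live stream, proxied without caching
location ~ /.+/.*\.(flv)$ {
    proxy_pass http://127.0.0.1:8080$request_uri;
}
```

Check whether caching works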

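How can you tell whether the cache is working? Put the $upstream_cache_status variable in the NGINX log format, then analyze the access log. The main_cache format in the cache configuration above already does this. If you add the standalone snippet below to nginx.conf instead, note that many stock nginx.conf files already define a format named main, and nginx rejects duplicate log_format names.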

```nginx
log_format main '$upstream_cache_status $remote_addr - $remote_user [$time_local] "$request" '
                '$status $body_bytes_sent "$http_referer" '
                '"$http_user_agent" "$http_x_forwarded_for"';
access_log /var/log/nginx/access.log main;
```

The first field is the cache status. You can analyze it with the command below, for example to look only at TS segments:

```shell
grep -F '.ts HTTP' /var/log/nginx/access.log \
  | awk '{print $1}' | sort | uniq -c | sort -rn
```

Requests counted as HIT were served straight from NGINX's cache, without downloading the file from SRS.
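To turn those counts into one number, here is a small extension of the same pipeline. It is a sketch, assuming the log format above with $upstream_cache_status as the first field, and ts_cache_summary is a hypothetical helper name. It counts each status for .ts requests and prints the share of HITs. A high HIT ratio means most segment requests never reach SRS.

```shell
#!/usr/bin/env bash
# Count cache statuses for .ts requests and print the HIT ratio.
# Assumes field 1 of each log line is $upstream_cache_status.
ts_cache_summary() {
  local log="${1:-/var/log/nginx/access.log}"
  # -F: match ".ts HTTP" literally (a bare "." in grep would match any character)
  grep -F '.ts HTTP' "$log" | awk '
    { count[$1]++; total++ }
    END {
      for (s in count) printf "%s %d\n", s, count[s]   # order is unspecified
      if (total > 0) printf "HIT ratio: %.1f%%\n", 100 * count["HIT"] / total
    }'
}
```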

Related resources

Open-source project: https://github.com/ossrs/srs

Edge Server: https://ossrs.io/lts/zh-cn/docs/v5/doc/edge

Nginx Edge Server: https://github.com/ossrs/oryx/tree/main/scripts/nginx-hls-cdn

Web player: https://h5player.bytedance.com/guide/#%E5%AE%89%E8%A3%85